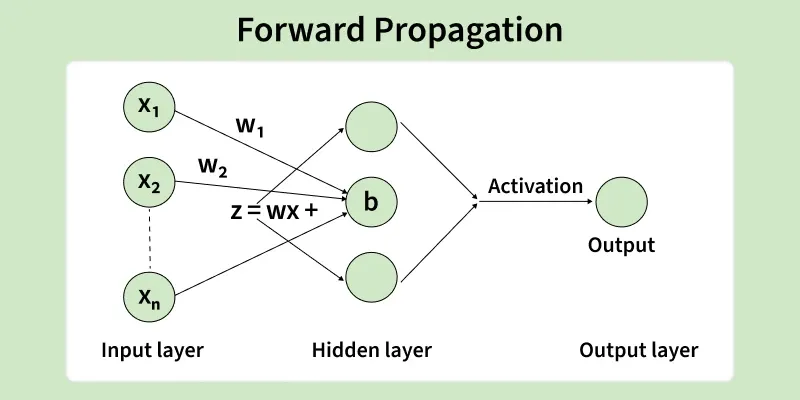

Forward propagation in neural networks is the process where input data flows through each layer of the model to generate an output. It’s the step-by-step computation that transforms raw inputs into predictions using weights, biases and activation functions. This operation forms the backbone of how neural networks learn patterns and make decisions.

Forward Propagation

- It computes intermediate values layer by layer, starting from the input layer and ending at the output layer.

- Each neuron applies weighted sums and activation functions to extract features.

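- It runs during both training and inference. The forward pass itself never changes the weights; during training, updates happen afterwards in backpropagation.

- Prediction accuracy depends on how well the current weights and biases, applied during forward propagation, capture patterns in the input data.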

Working

1. Input Layer: The network begins by receiving raw data through the input layer. Each feature in the dataset corresponds to a neuron in this layer, allowing the model to read all required information. Before entering the network, the data is often normalized or standardized to ensure faster training and better stability.

2. Hidden Layers: The processed input then passes through one or more hidden layers, where most of the computation happens. Every neuron in a hidden layer performs a weighted calculation on its inputs and then applies an activation function to introduce non-linearity. The computation inside each neuron follows:

$$z = W \cdot x + b$$

where:

- $W$ represents the weights

- $x$ is the input vector

- $b$ is the bias term

After this, an activation function such as ReLU or sigmoid is applied to produce the neuron’s output, which is then passed forward.
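As a rough illustration of the neuron computation described above, the sketch below evaluates a single neuron in plain Python. The function names (`weighted_sum`, `relu`, `sigmoid`) and all numeric values are made up for this example and do not come from any particular library.

```python
import math

def weighted_sum(weights, inputs, bias):
    """Weighted sum of the inputs plus the bias for a single neuron."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias

def relu(z):
    """ReLU activation: keeps positive values, maps negatives to 0."""
    return max(0.0, z)

def sigmoid(z):
    """Sigmoid activation: squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical weights, inputs and bias, chosen only for illustration.
weights = [0.5, -0.25]
inputs = [2.0, 4.0]
bias = 0.5

z = weighted_sum(weights, inputs, bias)  # 0.5*2.0 + (-0.25)*4.0 + 0.5
print("z =", z)
print("ReLU(z) =", relu(z))
print("sigmoid(z) =", sigmoid(z))
```

Whichever activation is chosen, its result is what the neuron passes on to the next layer.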

3. Output Layer: The final layer generates the model’s prediction. The choice of activation function depends on the task:

- Softmax → multi-class classification

- Sigmoid → binary classification

- Linear → regression

This layer converts the processed information into a meaningful output.
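To make the three output-layer choices concrete, here is a minimal sketch in plain Python. The helper names and the raw scores are assumptions for illustration; deep learning libraries ship their own versions of these functions.

```python
import math

def softmax(scores):
    """Turn a list of raw scores into probabilities that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # subtracting the max avoids overflow
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(score):
    """Map one raw score to a value in (0, 1) for binary classification."""
    return 1.0 / (1.0 + math.exp(-score))

def linear(score):
    """Identity activation: the raw score is the regression output."""
    return score

raw_scores = [2.0, 1.0, 0.1]  # hypothetical raw outputs for three classes
class_probs = softmax(raw_scores)

print("softmax:", class_probs)          # one probability per class
print("sigmoid:", sigmoid(raw_scores[0]))
print("linear:", linear(raw_scores[0]))
```

Softmax keeps the ordering of the raw scores, so the class with the largest score also receives the largest probability.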

4. Prediction: Based on the current weights and biases, the network produces its final output. During training, this prediction is compared with the true value using a loss function; the resulting error is then passed to backpropagation, which updates the weights.
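The comparison step can be sketched with one common loss, mean squared error. This choice is an assumption made for illustration, since the text does not name a specific loss function; the function name and the values are also made up.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical targets and predictions, for illustration only.
y_true = [1.0, 0.0, 1.0]
y_pred = [0.5, 0.5, 1.0]

loss = mean_squared_error(y_true, y_pred)  # (0.25 + 0.25 + 0.0) / 3
print("loss =", loss)
```

A smaller loss means the predictions are closer to the true values.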

Mathematical Explanation of Forward Propagation

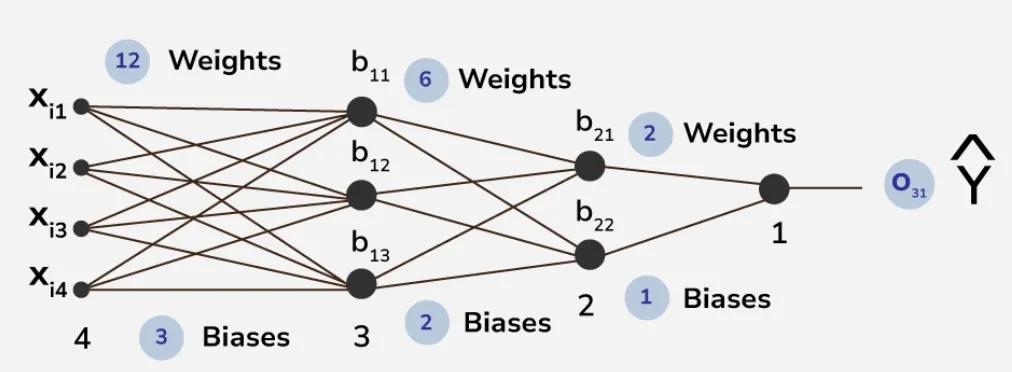

Consider a neural network with one input layer, two hidden layers and one output layer.

architecture of a neural network

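1. Layer 1 (First Hidden Layer)

$$a^{[1]} = \sigma\left(W^{[1]} x + b^{[1]}\right)$$

2. Layer 2 (Second Hidden Layer)

$$a^{[2]} = \sigma\left(W^{[2]} a^{[1]} + b^{[2]}\right)$$

3. Output Layer

$$\hat{y} = \sigma\left(W^{[3]} a^{[2]} + b^{[3]}\right)$$

where $\hat{y}$ is the final output. Thus the complete equation for forward propagation is:

$$\hat{y} = \sigma\left(W^{[3]}\,\sigma\left(W^{[2]}\,\sigma\left(W^{[1]} x + b^{[1]}\right) + b^{[2]}\right) + b^{[3]}\right)$$

This equation illustrates how data flows through the network:

- Weights ($W^{[l]}$) determine the importance of each input

- Biases ($b^{[l]}$) adjust activation thresholds

- Activation functions ($\sigma$) introduce non-linearity to enable complex decision boundaries.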
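The layer-by-layer flow for a network with two hidden layers and one output layer can be sketched in NumPy as follows. The layer sizes, the seed, the input values and the names `forward` and `params` are all assumptions for this example, and sigmoid is used at every layer only to keep the sketch short.

```python
import numpy as np

def sigmoid(z):
    """Element-wise sigmoid activation."""
    return 1.0 / (1.0 + np.exp(-z))

def forward(x, params):
    """Apply activation(W @ previous_activation + b) for each layer in turn.

    Returns the list of activations, starting with the input itself.
    """
    activations = [x]
    for W, b in params:
        z = W @ activations[-1] + b
        activations.append(sigmoid(z))
    return activations

rng = np.random.default_rng(0)  # fixed seed so repeated runs give the same numbers
layer_sizes = [3, 4, 4, 1]      # hypothetical: 3 inputs, two hidden layers of 4, 1 output

# One (W, b) pair per layer; W has shape (units_out, units_in).
params = [
    (rng.standard_normal((n_out, n_in)) * 0.1, np.zeros((n_out, 1)))
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
]

x = np.array([[0.2], [0.7], [0.1]])  # one made-up sample as a column vector
activations = forward(x, params)
y_hat = activations[-1]

print("activation shapes:", [a.shape for a in activations])
print("final output:", y_hat)
```

Each pass through the loop is one layer, so nesting the three calls by hand would give the same result as the composed equation.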

Implementation of Forward Propagation

2. Create Sample Dataset

- The dataset consists of CGPA, profile score and salary in LPA.

- The feature matrix contains only the input features (CGPA and profile score), not the salary column.
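A minimal sketch of such a dataset is shown below. The three records are invented for illustration and are not the article's actual data; the variable names `data`, `X` and `y` are likewise assumptions.

```python
import numpy as np

# Hypothetical records: (CGPA, profile score, salary in LPA).
# These values are invented for illustration only.
data = np.array([
    [8.5, 85.0, 10.0],
    [9.2, 92.0, 12.0],
    [7.8, 78.0, 8.0],
])

X = data[:, :2]  # input features: CGPA and profile score
y = data[:, 2:]  # salary in LPA, kept as a column

print("X shape:", X.shape)
print("y shape:", y.shape)
```

Keeping one sample per row means a whole batch can later be pushed through the network with a single matrix product.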

3. Initialize Parameters

Parameters are initialized randomly. Random initialization breaks symmetry: if all weights started out equal, every neuron in a layer would compute and learn the same function.
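One possible way to set up the parameters is sketched below. The function name `initialize_parameters`, its arguments, the seed and the 0.01 scale are assumptions, not values confirmed by the text. The last lines show the symmetry problem: with all-zero weights, every output neuron produces the same value.

```python
import numpy as np

def initialize_parameters(n_features, n_outputs, seed=1, scale=0.01):
    """Return small random weights and zero biases (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((n_features, n_outputs)) * scale
    b = np.zeros((1, n_outputs))
    return W, b

W, b = initialize_parameters(n_features=2, n_outputs=3)
print("W:\n", W)
print("b:", b)

# Symmetry check on one made-up input row.
x = np.array([[8.5, 85.0]])
zero_out = x @ np.zeros((2, 3)) + b  # all three neurons give the same value
rand_out = x @ W + b                 # neurons now give different values
print("zero-weight outputs:", zero_out)
print("random-weight outputs:", rand_out)
```

Biases can safely start at zero because the random weights already make the neurons differ.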

4. Define Forward Propagation

- The first step computes the linear transformation: the weighted sum of the inputs plus the bias.

- Sigmoid activation ensures values remain between 0 and 1.
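The two steps above might look like this in NumPy. This is a sketch under assumptions: the names `forward_propagation`, `Z` and `A`, the input rows and the parameter values are all hypothetical. The numbers it prints depend on those made-up values and will generally differ from the output listed later in this article.

```python
import numpy as np

def sigmoid(Z):
    """Element-wise sigmoid; every output lies strictly between 0 and 1."""
    return 1.0 / (1.0 + np.exp(-Z))

def forward_propagation(X, W, b):
    """Linear step followed by sigmoid. X holds one sample per row."""
    Z = X @ W + b  # shape: (n_samples, n_outputs)
    A = sigmoid(Z)
    return A

# Hypothetical inputs and parameters, for illustration only.
X = np.array([[8.5, 85.0],
              [9.2, 92.0],
              [7.8, 78.0]])
W = np.array([[0.01], [-0.005]])
b = np.zeros((1, 1))

A = forward_propagation(X, W, b)
print("Final Output:\n", A)
```

Because the samples are stored as rows here, the product is written as `X @ W`; with column-vector inputs the same step would be written with the weight matrix on the left.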

5. Execute Forward Propagation

Here we run forward propagation using the functions defined in the previous steps.

Output:

    Final Output:
    [[0.40566303]
     [0.39810287]
     [0.41326819]]

- Each number is the model's output for one input row, computed with the initial, untrained weights.

- The values are sigmoid activations, so they lie between 0 and 1 and can be read as probability-like scores for classification.